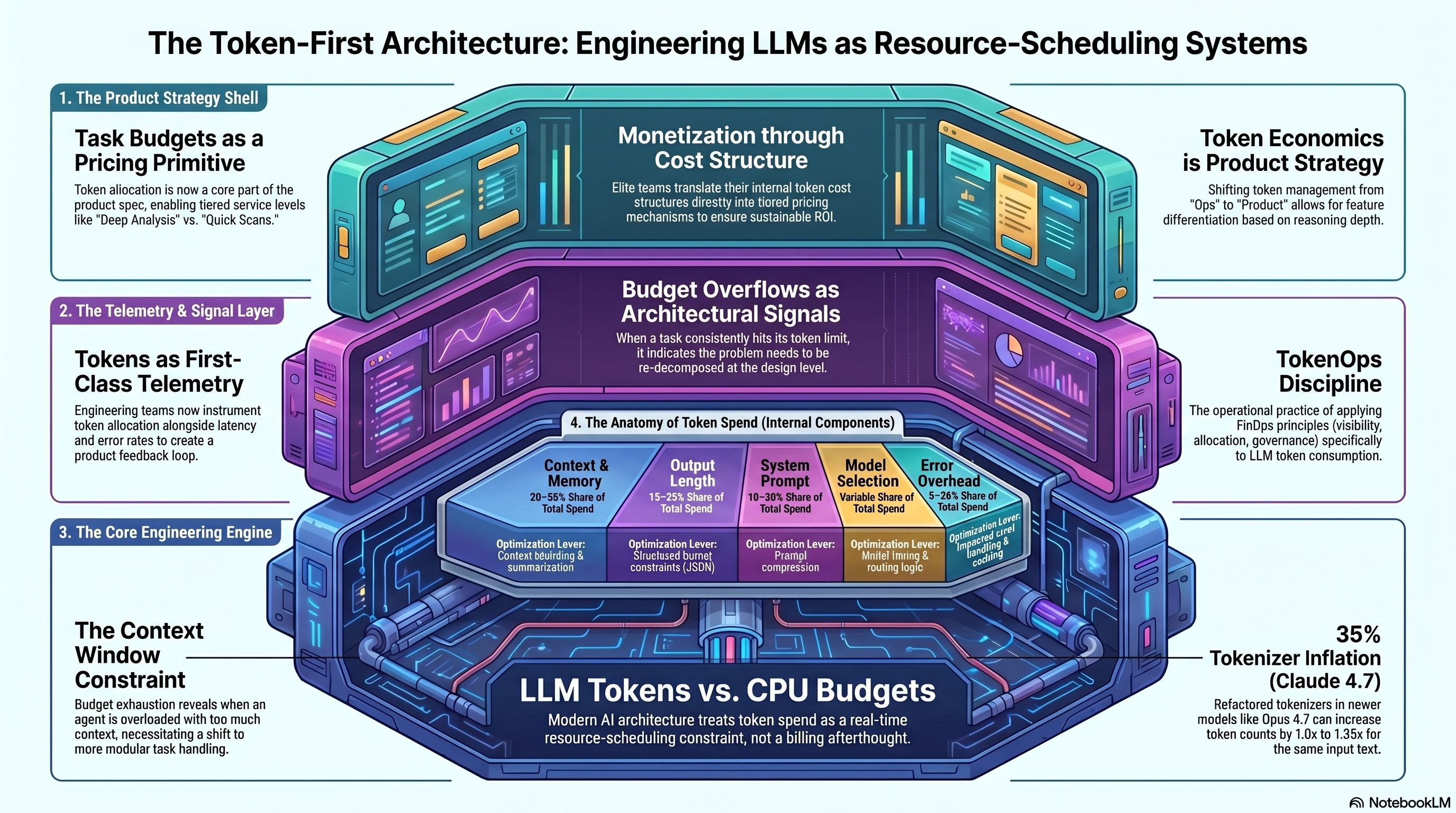

For most engineering teams, LLM token spend has been a billing line item. That era is ending.

With task budgets entering public beta on the Claude Platform API this week, developers now have API-level primitives to cap or guide total token expenditure across a given run or sub-task. On the surface, that sounds like cost control. Underneath it, it is something structurally more important: a new dimension of product architecture — and a largely overlooked monetization primitive.

The Architectural Signal

When a task consistently exhausts its budget before resolution, the conventional response is to increase the limit. The 1% move is to treat the overflow as an architectural signal. The model is not failing — your task decomposition is. The agent is being asked to hold too much context in a single span.

In Field Notes 003, I wrote about tokenmaxxing — the pathology of maximizing token consumption to signal productivity to leadership. Task budgets are the structural antidote to that problem. They force the question that tokenmaxxing avoids: not how many tokens did we use, but how many did we actually need.

Elite engineering teams will start instrumenting task budget allocations as first-class telemetry alongside latency and error rates. Budget overflow events become a product feedback loop — a structured prompt to re-decompose problems at the design level before they become cost problems at the operations level. This is directly analogous to CPU budget management in real-time systems. Engineers who internalize this framing will design fundamentally different — and more maintainable — agentic architectures than those who treat budgets as a cost dial.

There is an additional layer teams in production need to account for now. Refactored tokenizers in newer models like Opus 4.7 can increase token counts by 1.0× to 1.35× for the same input text. If your task budgets were calibrated against an earlier Claude version, you may be 35% underallocated before a single line of your application changes. Build headroom into your task specs, and revalidate your budgets whenever you upgrade model versions. This is not a footnote in a changelog — it is a design input that belongs in your architecture review.

The Monetization Story

Task budgets encode how much reasoning a problem deserves. That is a pricing primitive.

The differentiation is not arbitrary. It is grounded in the actual computational depth of the reasoning your product delivers. Customers are not paying for API calls — they are paying for reasoning value delivered. Task budgets let you make that value visible and billable.

The math is straightforward once you decide to run it. A quick scan task at 5,000 tokens costs roughly $0.015 in compute at current Sonnet pricing ($3 per million tokens). A deep analysis task at 50,000 tokens costs roughly $0.15. Price the latter at $0.50 per run and you are at 3.3× coverage on compute cost — the same three-times-cost threshold from Field Notes 002. Task budgets give you the mechanism to enforce that ratio at runtime, not just in a financial model. They are what makes tiered pricing structurally honest.

Most teams building on agentic infrastructure today are pricing by seat or by volume. The teams who figure out token economics as a product architecture decision will find a pricing model that scales naturally with the value they deliver.

What To Do This Week

Add task budgets to your next architecture review — not your billing review. Identify which tasks in your product carry the most reasoning weight. Those are your deep analysis candidates. Everything else is a quick scan. Start designing around that constraint.

The teams who get this right in the next six months will set the patterns everyone else copies in 2027.

Token economics isn’t ops. It’s product strategy.